The Illusion of “Having GRC Covered”: Why AI Is Exposing the Execution Gap in Modern GRC

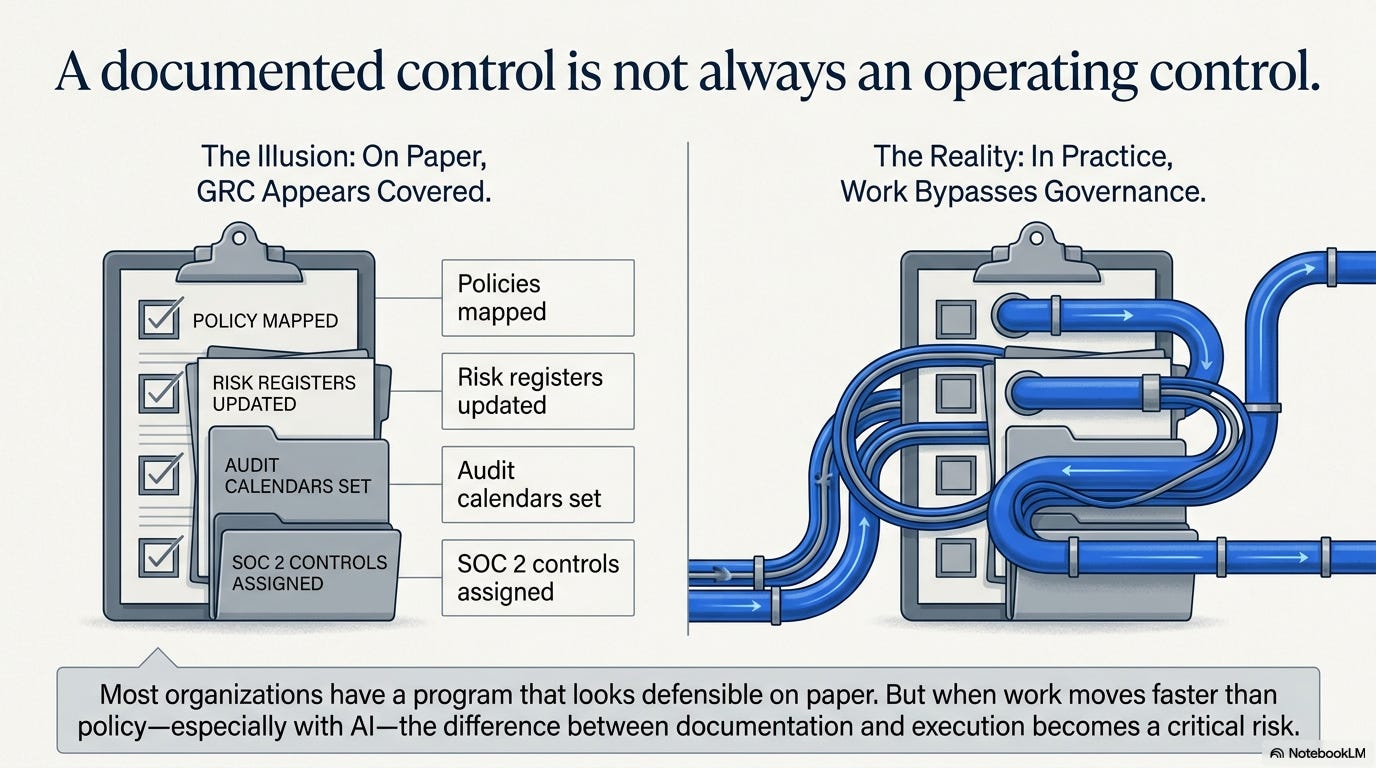

Most organizations can point to something that looks like a GRC program.

There are policies. Framework mappings. Risk registers. Control matrices. Audit calendars. Evidence folders. Vendor assessments. Access reviews. On paper, the program appears structured, defensible, and mature.

But anyone who has worked inside a real GRC environment knows the harder truth:

A documented control is not always an operating control.

That difference matters more now than it ever has.

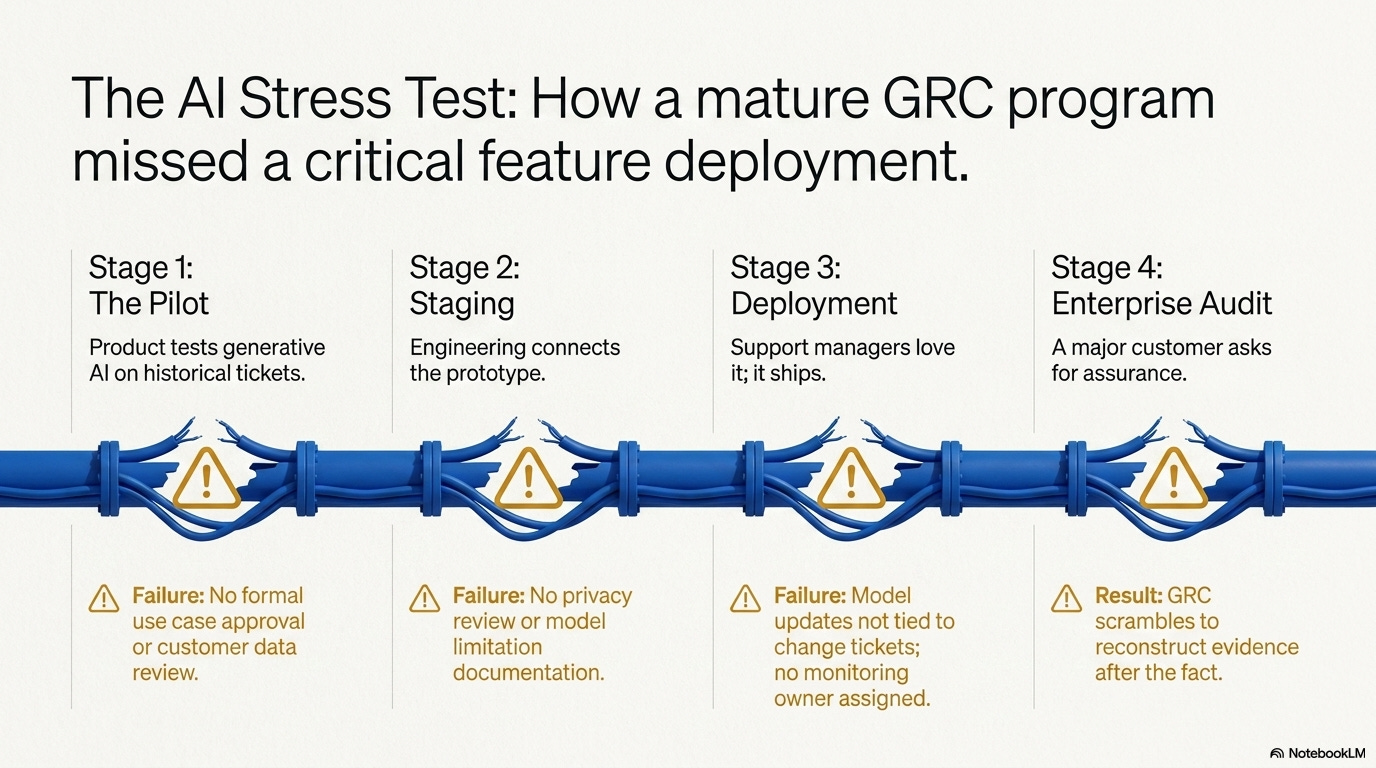

Consider a realistic scenario.

A mid-sized SaaS company provides a customer support platform used by healthcare, financial services, and technology clients. The company has SOC 2 controls, change management policies, risk review procedures, vendor assessments, access reviews, and control owners assigned inside its GRC platform.

From a leadership perspective, GRC appears covered.

Then the product team begins integrating AI into the platform.

The new feature is designed to summarize customer tickets, suggest response language to support agents, and identify recurring customer issues. The business case is strong. The feature could improve response times, improve the customer experience, and give enterprise clients better operational insight.

At first, it begins as a small internal pilot.

A product manager wants to test the idea. The data science team builds a prototype using historical support ticket data. Engineering connects it to a staging environment. Support leaders test it and like the results.

No one believes they are bypassing governance.

Product sees an enhancement. Engineering sees a feature update. Data science sees experimentation. Support sees efficiency. Leadership sees innovation.

But when GRC is brought in later, the questions start to surface:

Was the AI use case formally approved before development?

Was customer data reviewed before being used for testing?

Were model limitations documented?

Was human oversight defined?

Were validation results retained?

Were model changes tied to change tickets?

Who owns post-deployment monitoring?

Can the company prove the control worked without reconstructing evidence after the fact?

This is where the illusion of “having GRC covered” starts to break down.

The organization had a change management policy. It had a risk process. It had security procedures. It had audit evidence for other controls. But its governance process had not been fully translated into how AI work was actually happening inside the business.

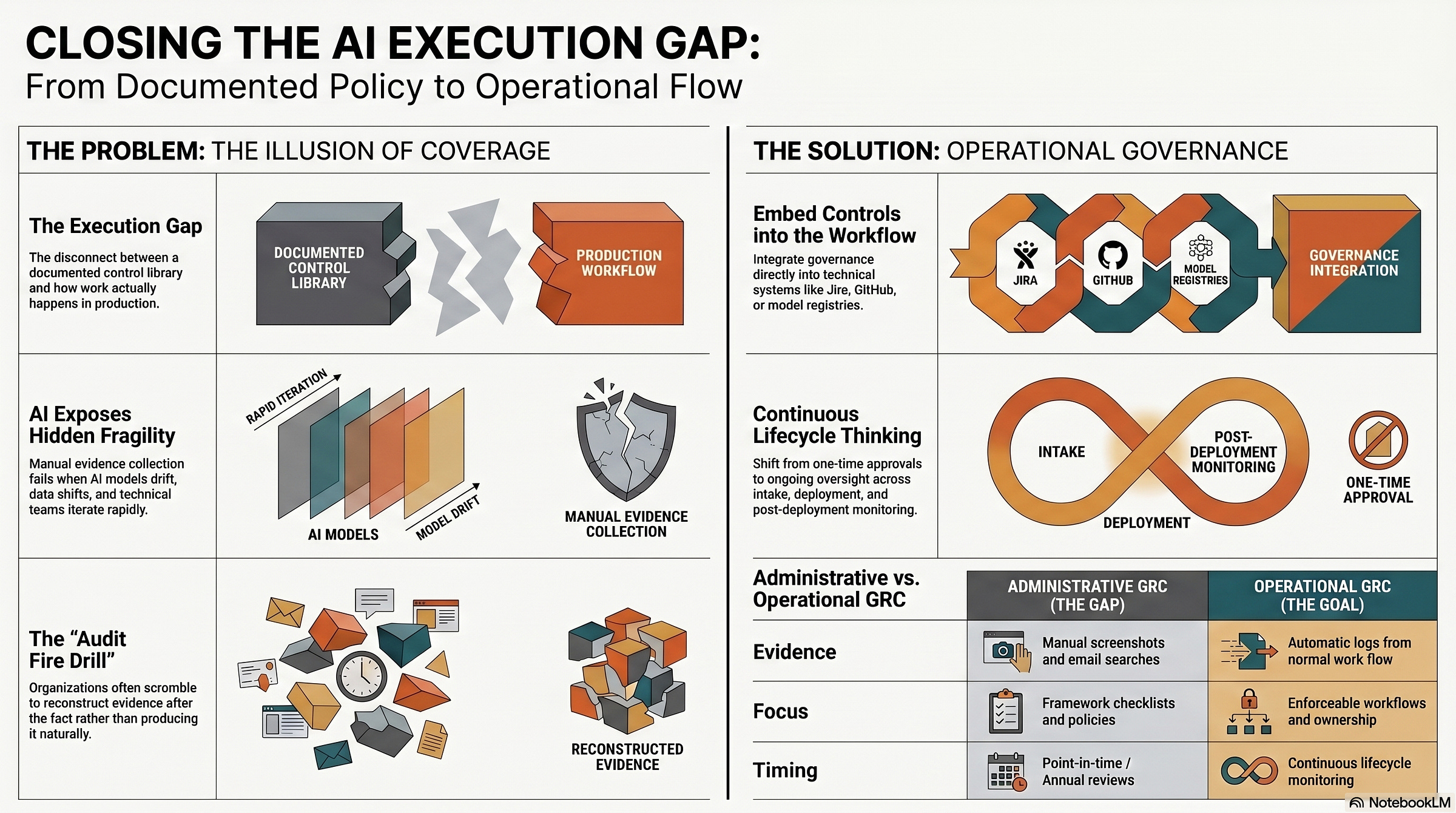

That is the execution gap.

For years, many organizations have managed the gap between policy and practice through manual effort. The policy says one thing. The process works slightly differently. Evidence exists somewhere, but it has to be pulled together before the audit. Control ownership is assigned, but not always clear when something breaks. Approvals happen, but not always in a system of record.

That approach may have been inefficient but manageable in slower environments.

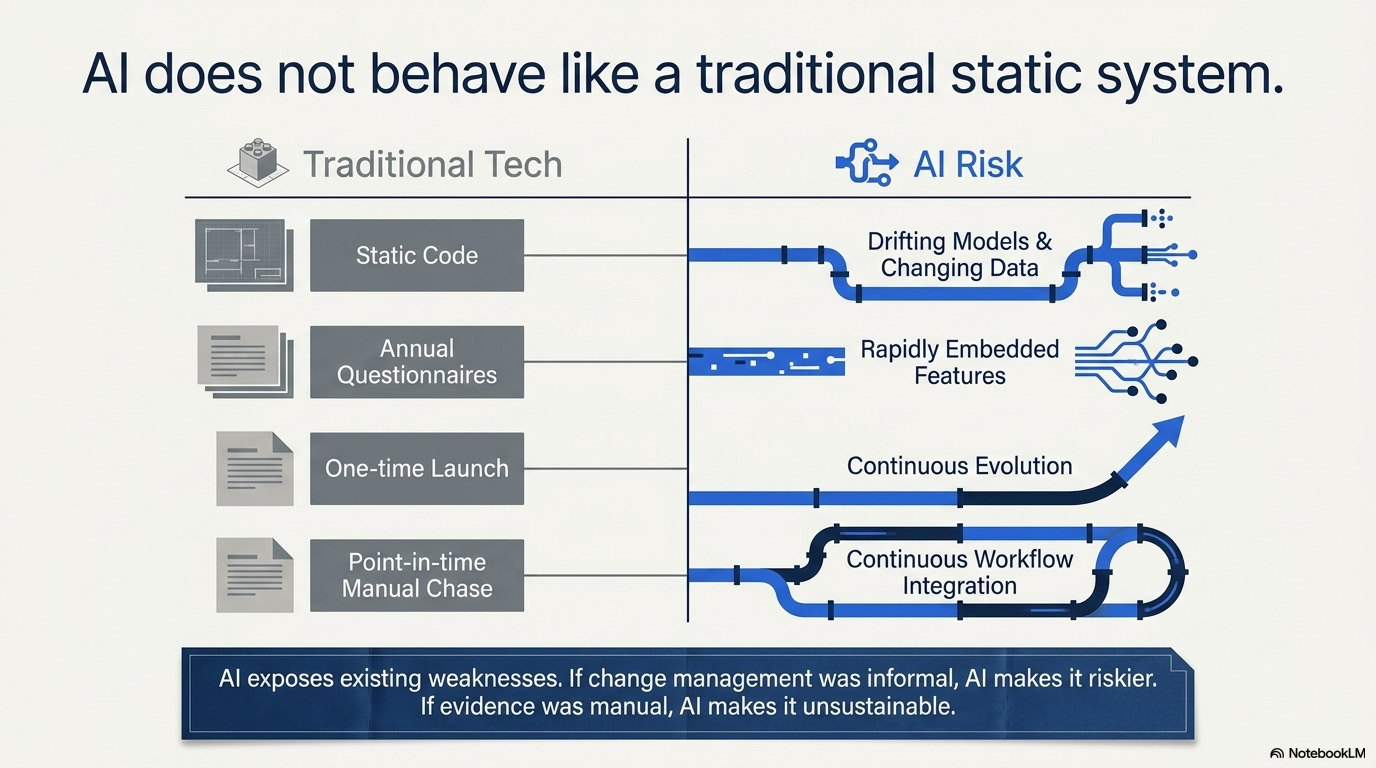

AI makes it harder to sustain.

AI does not behave like a traditional static system. Models change. Data changes. Outputs drift. Business teams adopt tools quickly. Vendors add AI features into platforms organizations already use. Technical teams iterate fast, and GRC teams are often asked to prove control after the fact.

That creates a serious challenge for GRC professionals, cybersecurity leaders, CISOs, technology managers, auditors, and newer practitioners trying to understand how modern governance actually works.

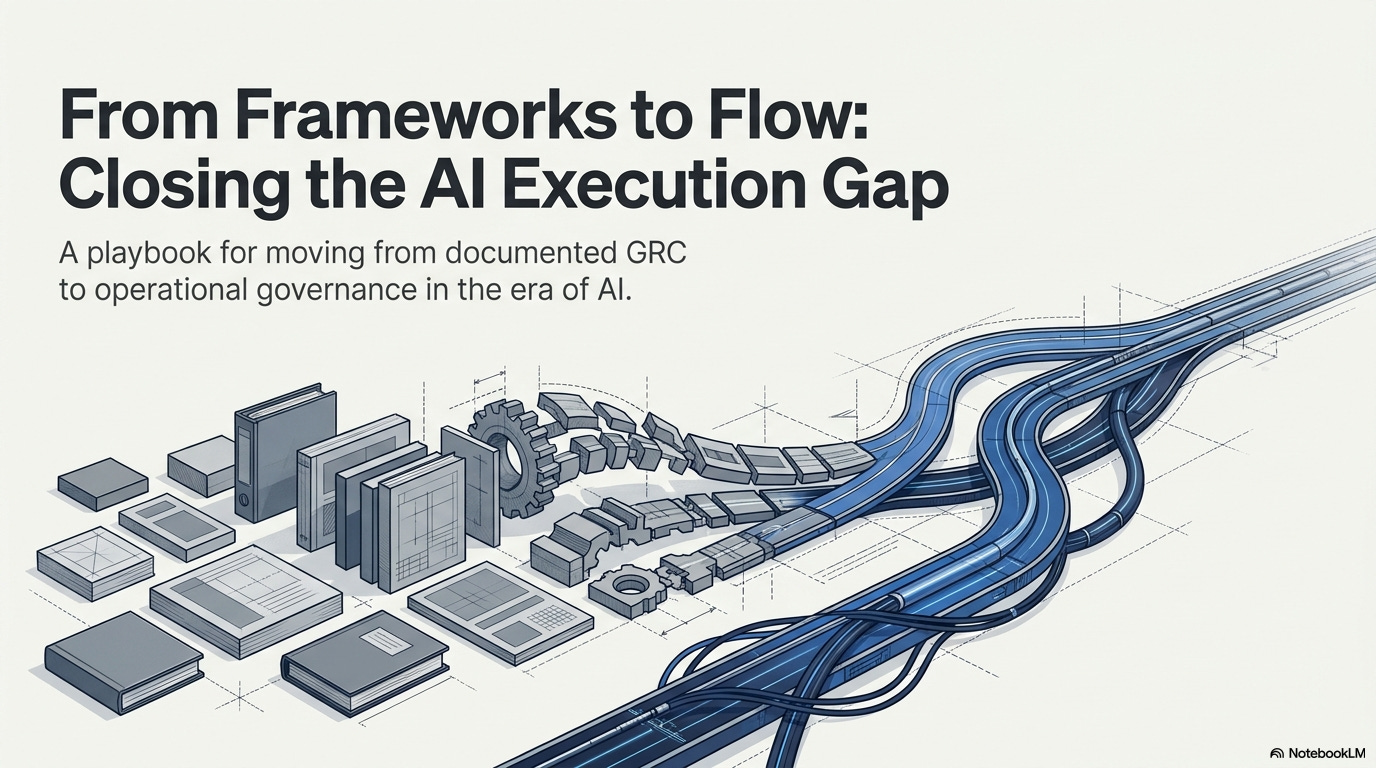

The future of GRC is not just about knowing the frameworks.

It is about making sure controls operate where risk actually happens.

This article looks at the execution gap behind modern GRC, why AI makes it more urgent, and how organizations can move from documented governance to operational governance, where ownership is clear, workflows are enforceable, evidence is built into the process, and controls can be proven.

It also walks through a practical use case showing how a SaaS company with a mature-looking GRC program discovered that its AI governance controls were not fully operating in the real workflow, and how the organization corrected the problem by turning policy into process.

GRC PROS is built for that kind of practical work. We focus on helping GRC professionals at every level move beyond checkbox compliance and understand how governance, risk, and compliance operate in real environments.

This post is paywall-free and available to all readers for ten days after publication. For more practical GRC strategy, frameworks, and real-world guidance, subscribe to the GRC PROS Blog on Substack.

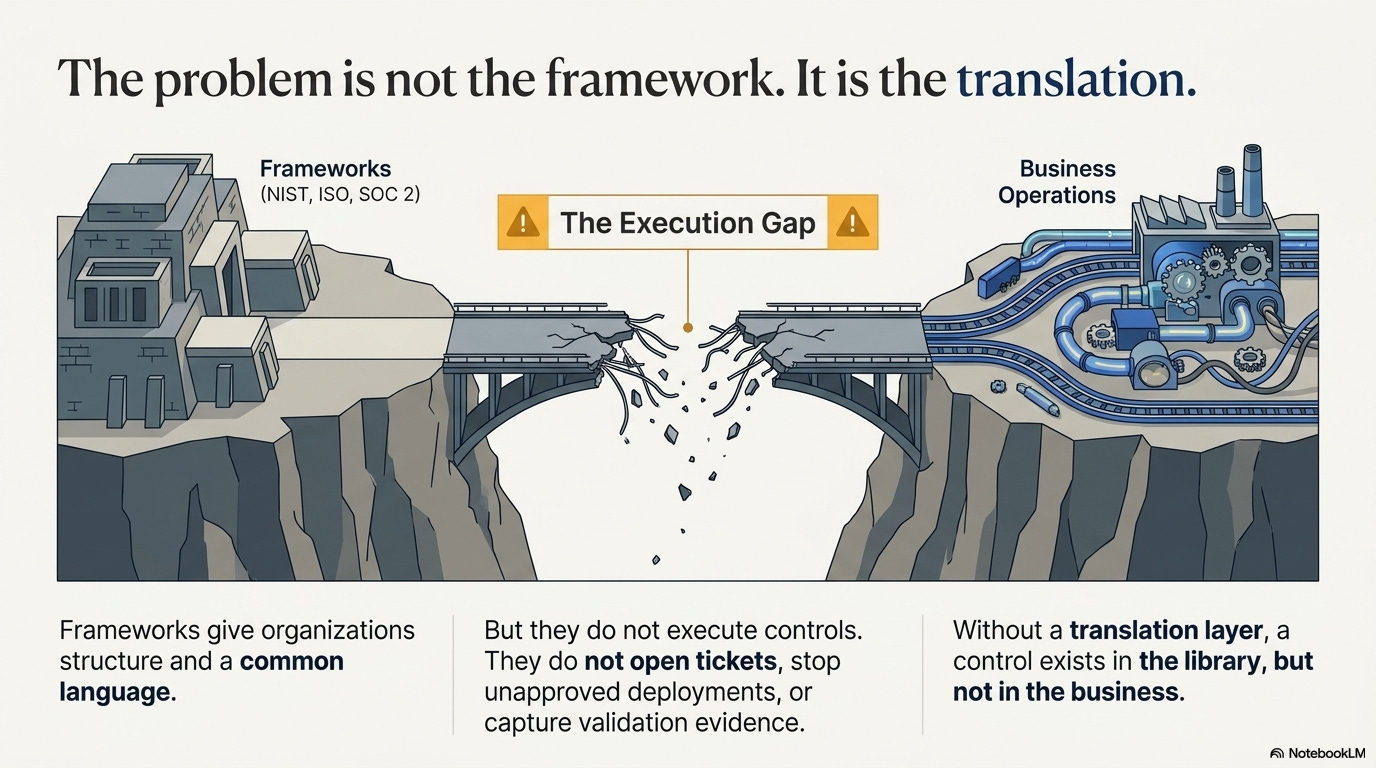

The Problem Is Not the Framework. It Is the Translation.

Frameworks matter.

Frameworks and standards from NIST and ISO, along with SOC 2, give organizations structure. They help define expectations, organize risk, communicate control requirements, and create a common language between security, compliance, audit, legal, technology, and leadership.

But frameworks do not execute controls.

They do not open tickets. They do not stop an unapproved deployment. They do not confirm whether a model validation happened. They do not make sure evidence was retained. They do not assign accountability when a control fails.

That work happens inside the organization.

This is where the real GRC challenge begins.

A framework may tell you that change management, access control, monitoring, risk assessment, or third-party oversight is required. But the organization still has to translate that requirement into a process people can actually follow.

That translation has to answer practical questions:

When is the control triggered?

Who owns the decision?

Where does the approval happen?

What evidence is created?

What happens if the process is skipped?

How do we know the control worked?

Without that translation, a control may exist in the control library but not in the business.

That is the execution gap.

And AI makes the gap more visible because AI changes quickly, touches multiple teams, and creates risks that are harder to govern through static documentation alone.

A policy may say that AI-related changes must be reviewed before deployment. But if model updates happen inside a data science workflow that GRC cannot see, the control is already weakened.

A standard may require risk management and monitoring. But if no one owns post-deployment model drift, the monitoring requirement is only partially real.

A procedure may require approval before using an AI-enabled vendor tool. But if business teams adopt features already embedded in existing software, the review process may never be triggered.

The issue is not that the framework is wrong.

The issue is that the framework has not been operationalized.

Where the Execution Gap Shows Up

The execution gap rarely appears all at once.

It usually shows up in small, familiar ways.

An approval happens in email instead of a workflow system. A risk review is discussed in a meeting but not documented. A model version is updated but not tied to a change record. A vendor adds AI functionality, but the third-party risk process does not revisit the use case. A team assumes someone else is monitoring performance, but no formal owner exists.

Each issue may seem manageable on its own.

Together, they create a governance problem.

Consider a common control requirement:

System, application, and model changes must be reviewed, approved, tested, and documented before deployment.

That statement sounds clear. Most organizations would agree with it. It also maps well to many security and compliance expectations.

But when you look at how work actually happens, the picture is often more complicated.

Data science teams may iterate rapidly because experimentation is part of their work. Engineering teams may be focused on release timelines. Business leaders may want AI-enabled capabilities deployed quickly. Security and compliance may be brought in late. Validation evidence may exist, but not in one place. Risk acceptance may be understood informally, but not documented in a way that can be reviewed later.

Then an auditor, regulator, customer, or internal risk committee asks a simple question:

Show me evidence that all material model changes were reviewed and approved before deployment.

That is when the difference between documentation and execution becomes obvious.

The organization may have a policy.

It may have a control.

It may even have teams that generally follow the right process.

But if it cannot show the approval trail, validation evidence, version history, risk review, and deployment linkage without scrambling, the control is not operating at the level the organization thinks it is.

This is not just an audit inconvenience.

It is a maturity issue.

AI Turns Weak Execution Into Visible Risk

AI does not create every governance problem from scratch.

In many cases, it exposes weaknesses that were already there.

If change management was informal, AI makes that informality riskier.

If evidence collection was manual, AI makes that process harder to sustain.

If control ownership was unclear, AI adds more teams and more ambiguity.

If monitoring was periodic, AI creates a need for ongoing review.

If vendor risk management was built around annual questionnaires, AI challenges whether that process can keep up with embedded AI features, changing model behavior, and evolving data use.

That is why AI governance cannot be treated as a simple policy add-on.

It requires organizations to revisit how controls actually operate across the lifecycle of AI use.

This includes questions such as:

Was the AI use case approved for its intended purpose?

Was data use reviewed?

Was the model or tool tested before deployment?

Were limitations documented?

Were human oversight expectations defined?

Is there monitoring after deployment?

Who owns review when outputs drift or performance changes?

Can the organization produce evidence without reconstructing it after the fact?

These questions are not only technical.

They are GRC questions.

They sit at the intersection of governance, risk, compliance, cybersecurity, privacy, legal, audit, technology, and business ownership.

That is why GRC teams need to move beyond being the group that asks for documentation after the fact.

Modern GRC has to help design the operating model before the risk becomes unmanageable.

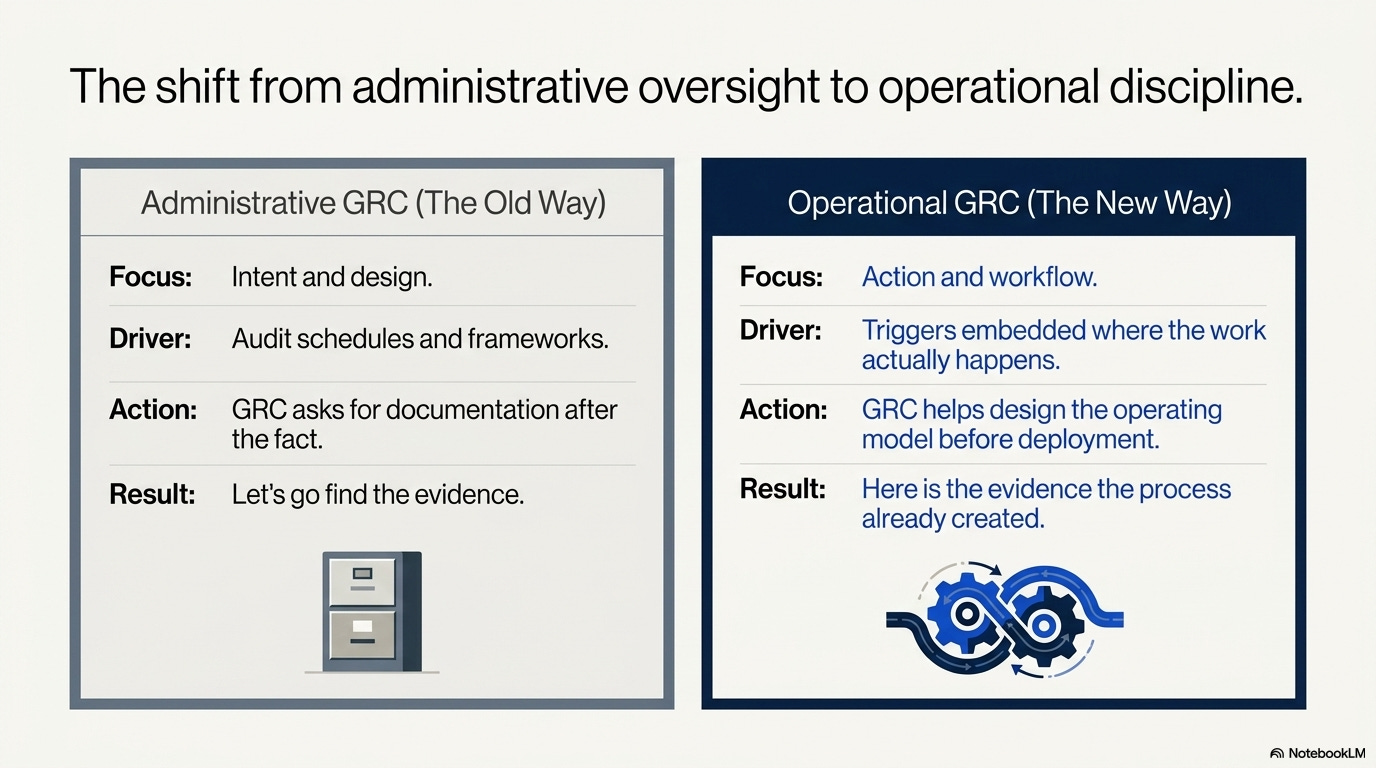

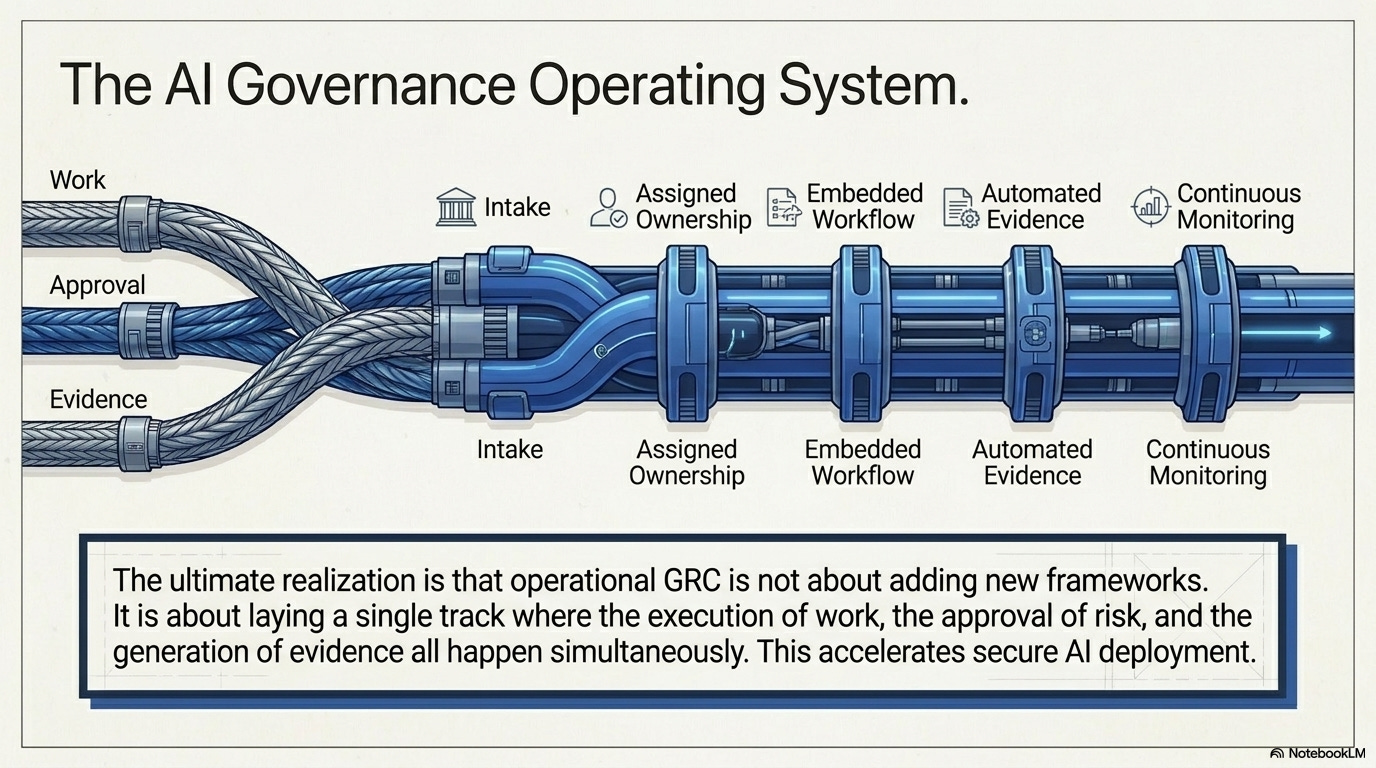

Operational GRC Starts Where the Work Actually Happens

A mature GRC program does not only ask whether a policy exists.

It asks whether the policy has been translated into the systems, workflows, roles, and evidence needed to make the control real.

That is the difference between administrative GRC and operational GRC.

Administrative GRC can tell you what the organization intended to do.

Operational GRC can show you what actually happened.

For AI model change management, that distinction matters.

A weak control says:

AI model changes must be approved before deployment.

A stronger operating model defines what that means in practice.

It identifies what counts as a material model change. It defines who submits the change. It requires validation results, risk impact, version details, and approval records. It establishes who must review the change before deployment. It connects approval to release activity. It captures evidence in a system of record. It defines what happens when an exception is needed.

The control becomes real because it has a place to operate.

This is where GRC can provide practical value.

GRC does not need to own every technical process. That would be unrealistic and ineffective. But GRC should help ensure that critical risks are translated into operating requirements that technical, business, and risk teams can execute consistently.

That means working with the business to understand intended use.

It means working with technology teams to understand where controls can be embedded.

It means working with security and privacy teams to identify review requirements.

It means working with audit to understand what evidence will be needed.

It means working with leadership to make sure tradeoffs are visible.

This is the work that separates a documented program from a functioning one.

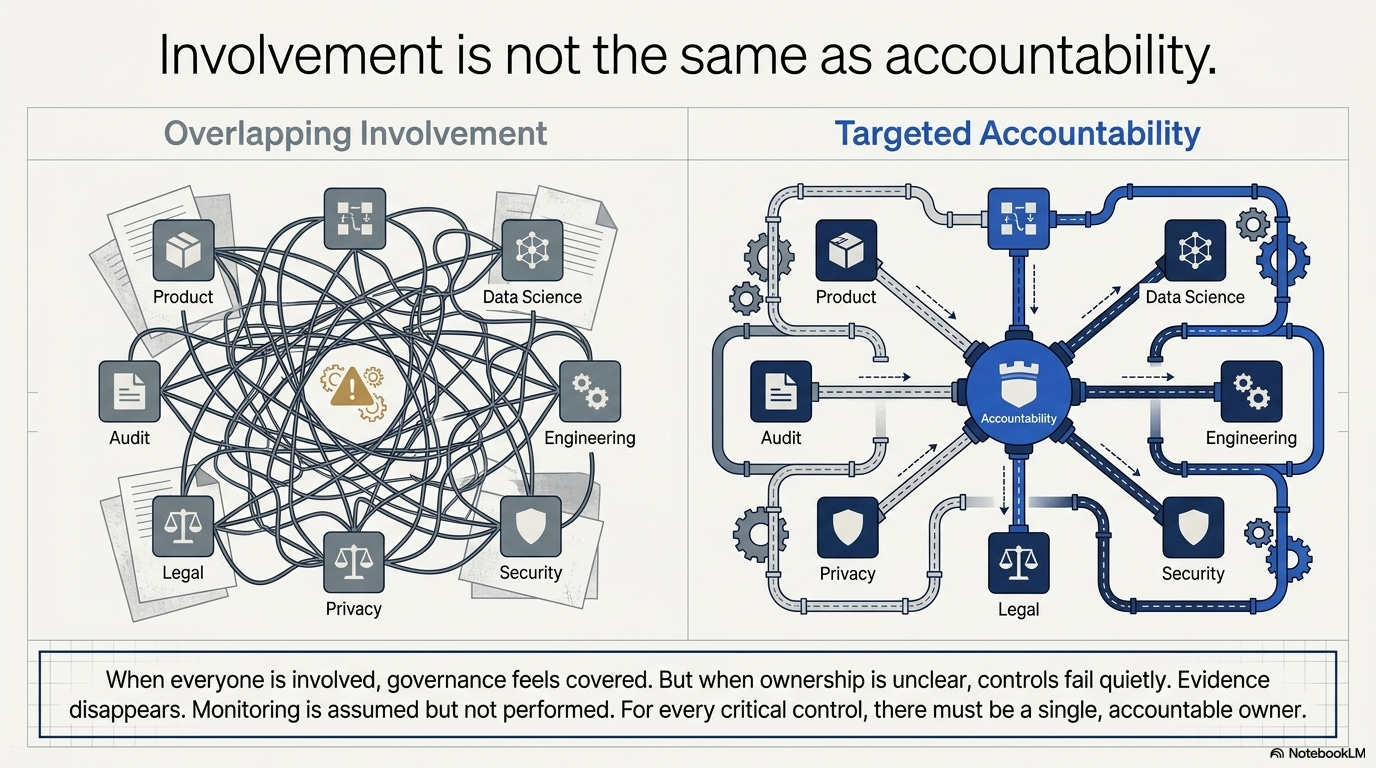

Ownership Is Where Many Controls Break Down

One of the biggest reasons GRC controls fail is not lack of effort. It is unclear ownership.

AI makes this problem worse because AI governance rarely belongs to one team. Data science may own model development. Engineering may own deployment. Security may own technical risk. Privacy may own data use. Legal may own regulatory exposure. Compliance may own control requirements. The business may own the use case. Audit may test the process later.

When all of those teams are “involved,” it can feel like governance is covered.

But involvement is not the same as accountability.

For every critical control, there needs to be a clear accountable owner. That owner does not have to perform every task, but they are responsible for making sure the control operates, evidence is retained, exceptions are reviewed, and failures are escalated. Supporting roles also need to be clear.

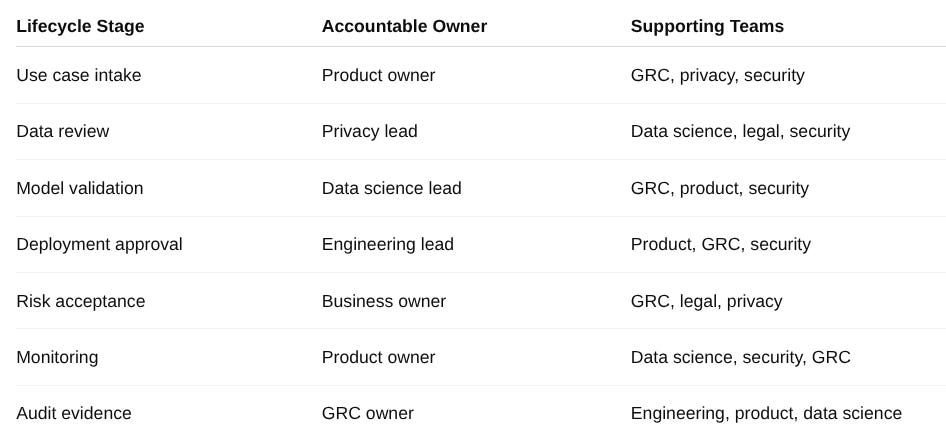

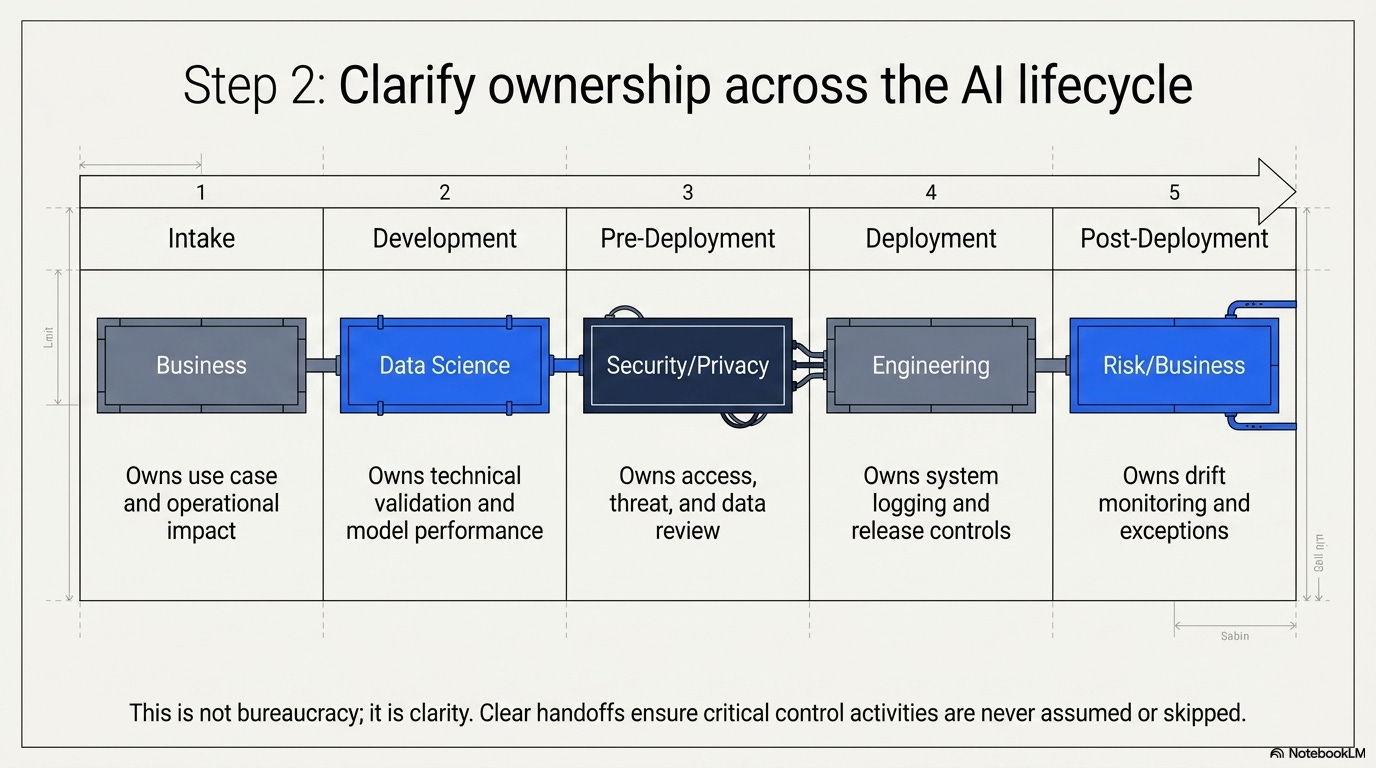

For example, in an AI model change process:

Data science may own technical validation and model performance.

Engineering may own deployment controls and system logging.

Security may review access, threat, and vulnerability concerns.

Privacy or legal may review data use and regulatory exposure.

Risk or compliance may define control expectations and risk acceptance requirements.

The business owner may own intended use and operational impact.

Audit may independently test whether the process works.

This does not need to become bureaucratic. It needs to become clear.

When ownership is unclear, controls fail quietly. Evidence disappears. Exceptions remain open. Monitoring is assumed but not performed. Approvals happen informally. No one notices the weakness until an audit, incident, customer review, or regulatory inquiry forces the question.

Clear ownership is not about assigning blame. It is about making governance sustainable.
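One way to make the "involved versus accountable" distinction testable is to write the assignments down as data and check them. The sketch below is illustrative only: the activity names, the role assignments, and the "A" (accountable) and "S" (supporting) codes are assumptions made for this example, not a prescribed model.

```python
from collections import Counter

# Hypothetical assignments, loosely based on the example roles above.
# Each entry is (activity, role, kind); "A" = accountable, "S" = supporting.
assignments = [
    ("technical validation", "Data Science", "A"),
    ("technical validation", "Engineering", "S"),
    ("deployment controls", "Engineering", "A"),
    ("data use review", "Privacy", "A"),
    ("post-deployment monitoring", "Data Science", "S"),
    ("post-deployment monitoring", "Engineering", "S"),
]


def ownership_gaps(assignments):
    """Return activities that do not have exactly one accountable owner."""
    accountable = Counter(
        activity for activity, _role, kind in assignments if kind == "A"
    )
    activities = {activity for activity, _role, _kind in assignments}
    return sorted(a for a in activities if accountable[a] != 1)


print(ownership_gaps(assignments))  # ['post-deployment monitoring']
```

In this made-up data, two teams support post-deployment monitoring but no one is accountable for it, which is exactly the kind of quiet gap described above.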

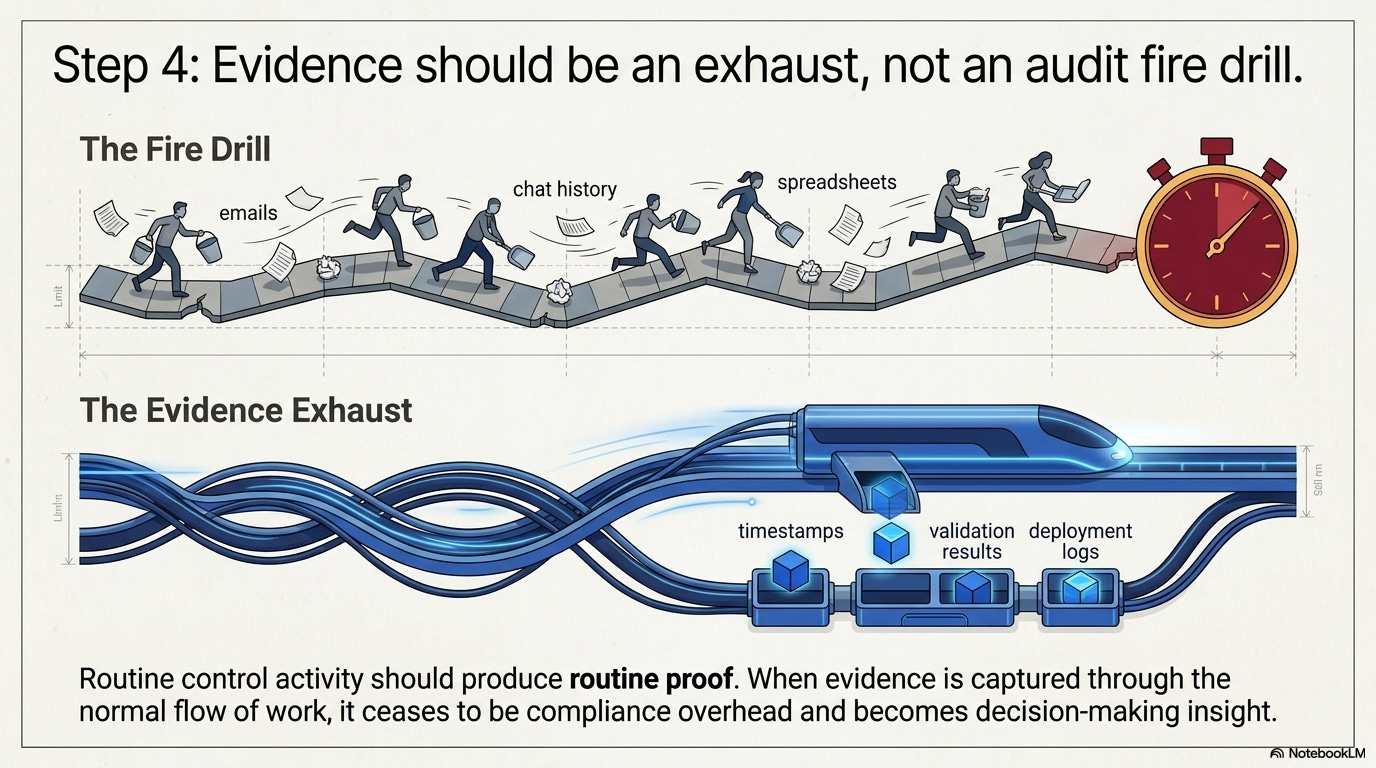

Evidence Should Not Be an Audit Fire Drill

If evidence has to be manually chased every time an audit begins, the control is not mature.

That does not mean every control must be fully automated. Some controls will always require judgment, review, or human approval. But the evidence model should be designed so that routine control activity produces routine proof.

This is one of the most important shifts for modern GRC.

Evidence should not be treated as something created only for auditors. Evidence is proof that the business is operating the way it said it would.

In a weak evidence model, teams rely on screenshots, email searches, manual spreadsheets, chat history, and last-minute explanations. That creates audit fatigue and weak assurance.

In a stronger model, evidence is captured through the normal flow of work: tickets, approval logs, timestamps, version records, validation results, deployment logs, monitoring dashboards, exception registers, and periodic review records.

The audit conversation changes from:

Let’s go find the evidence.

to:

Here is the evidence the process already created.

That is a much stronger position for the organization.

It is also more useful for management. Evidence does not only support compliance. It helps leaders understand whether controls are working, where delays occur, where exceptions are increasing, and where governance may need improvement.

This is where operational GRC becomes valuable beyond audit readiness.

It becomes a source of decision-making insight.

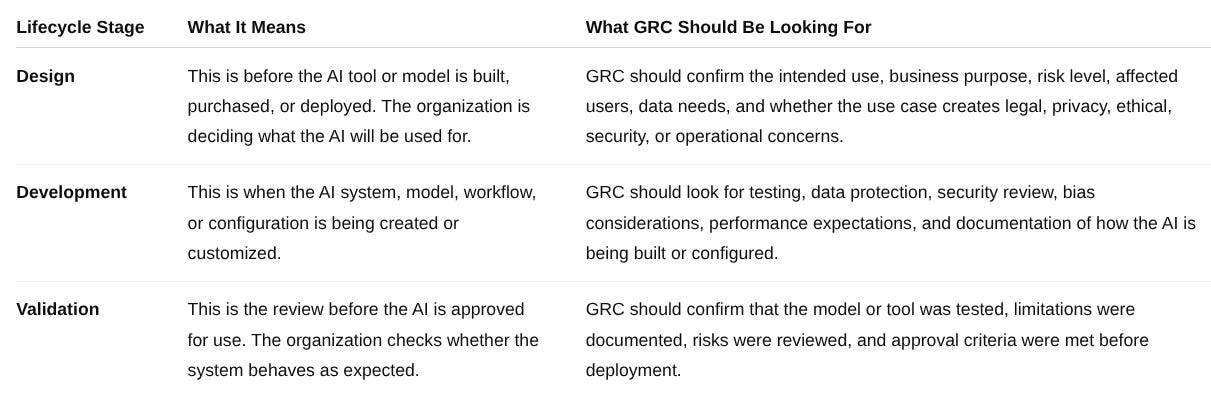

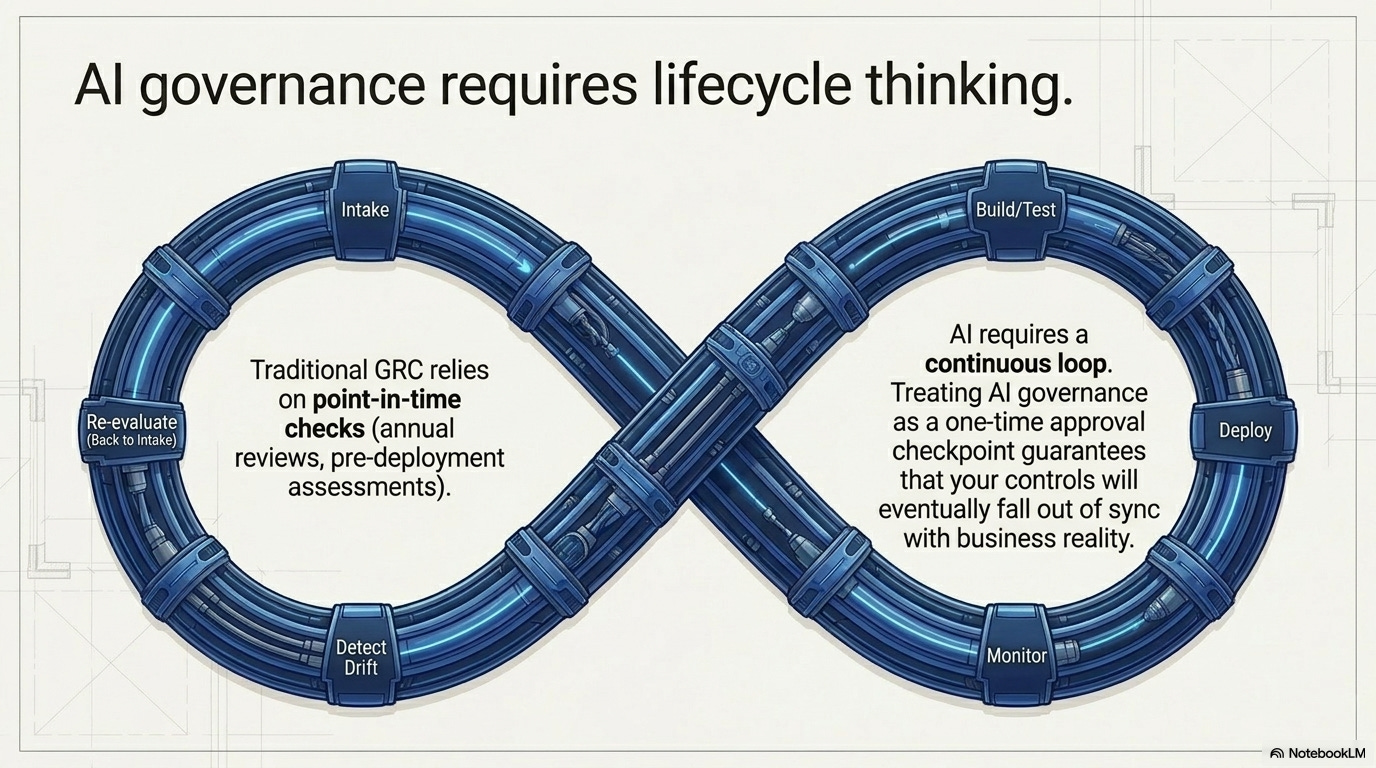

AI Governance Requires Lifecycle Thinking

Many traditional GRC activities are point-in-time.

An annual review. A quarterly access certification. A pre-deployment assessment. A vendor reassessment. An audit request.

Those activities still matter.

But AI requires lifecycle thinking because AI risk does not stop once a tool, model, or feature is approved.

A model can drift after deployment.

A vendor can change AI functionality.

A business process can begin using AI outputs in a way that was not originally intended.

Data inputs can shift.

Users can rely too heavily on automated recommendations.

Performance can degrade.

New regulatory expectations can emerge.

This means AI governance needs to account for the full lifecycle:

The purpose of this lifecycle view is to show that AI governance cannot be reduced to a single approval checkpoint.

A model or AI-enabled tool may be acceptable at the time of deployment, but its risk profile can change as data shifts, users interact with it differently, vendors update functionality, or business reliance increases.

GRC teams need to understand where governance belongs at each stage of the lifecycle, what evidence should be created, and who is accountable for ongoing oversight.

This is how AI governance moves from a policy statement into an operating process.

This table is not meant to create more bureaucracy.

It is meant to make the lifecycle visible.

When organizations treat AI governance as a one-time approval, they miss the fact that the risk can change after approval. The better approach is to define what governance needs to happen at each stage, who owns it, and what evidence should exist when the work is done.

That is how AI governance becomes operational.

What Mature Organizations Do Differently

Organizations that operationalize GRC well are not perfect. They are disciplined.

They understand that policies, frameworks, and control libraries are only the foundation. The real work is building repeatable processes that can operate under pressure.

They tend to do a few things differently.

First, they design controls backwards from evidence. They ask what they would need to show an auditor, regulator, customer, or executive, and then they build the process so that evidence is produced naturally.

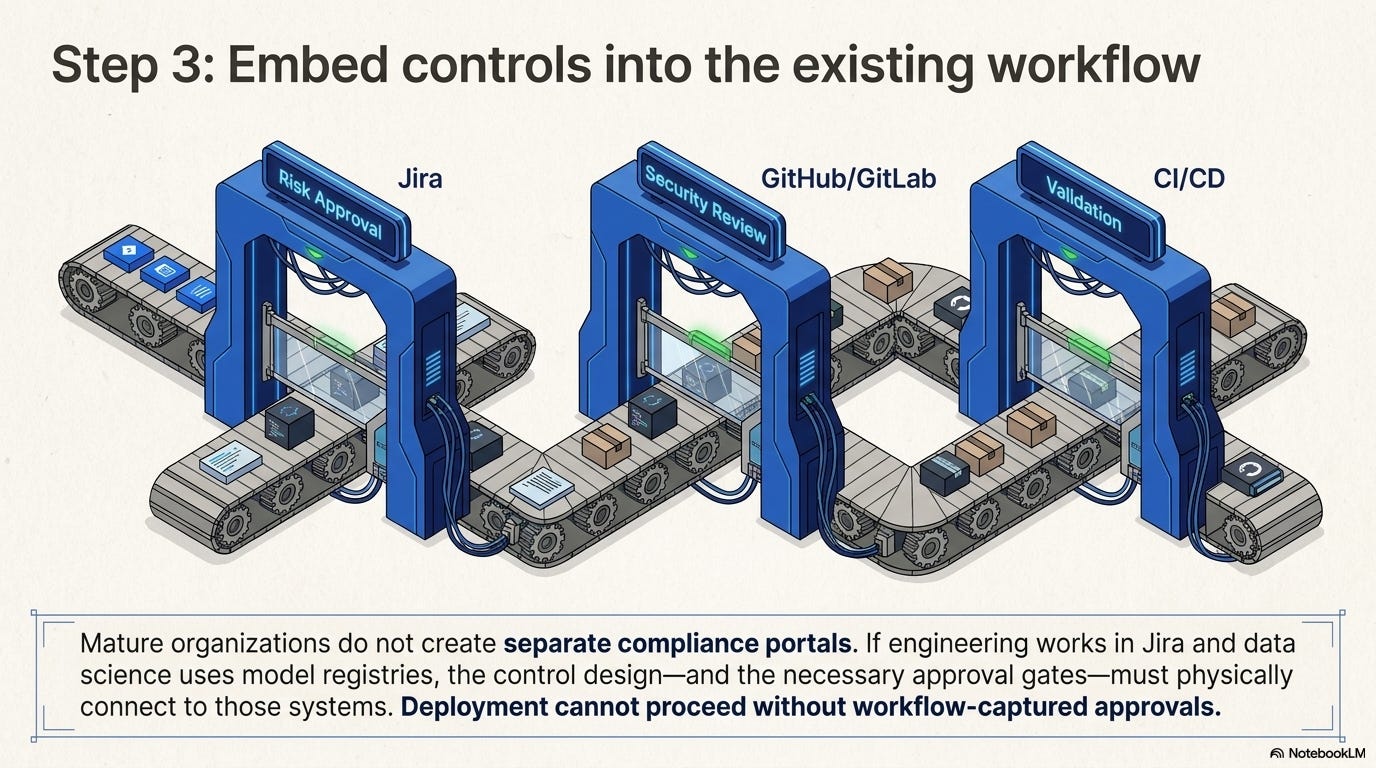

Second, they embed GRC into existing systems instead of creating disconnected side processes. If engineering teams work in Jira, ServiceNow, GitHub, GitLab, or CI/CD tooling, then the control design needs to account for those systems. If data science teams use model registries, experimentation platforms, or deployment pipelines, governance needs to connect there too.

Third, they treat GRC as an operating discipline, not an annual compliance exercise. They review control performance. They track exceptions. They monitor trends. They revisit ownership. They improve workflows over time.

Finally, they accept tradeoffs. This matters. Stronger controls can slow down deployment. Better evidence capture may require tooling changes. Clearer ownership may create uncomfortable conversations. Lifecycle governance may add review steps. But mature organizations do not let those tradeoffs happen accidentally. They make deliberate decisions. That is the difference between governance that supports the business and governance that only appears during audit season.

A Practical Way to Start

The best way to close the execution gap is not to rebuild the entire GRC program at once.

Start with one control that matters.

Choose a control that is visible, important, and currently painful. AI model change management is a strong example, but the same approach can apply to AI tool intake, vendor AI review, exception management, access governance, or cloud control monitoring.

Start by mapping how the process actually works today.

Not how the policy says it works.

How it really works.

Where do requests come from? Where do approvals happen? Who reviews risk? What evidence is created? Where does it live? What happens when the process is skipped? Who knows when an exception is overdue?

Once the current reality is clear, define the minimum viable workflow.

That workflow should answer the basics:

What triggers the control?

Who owns it?

What review is required?

Where does approval happen?

What evidence is created?

How are exceptions handled?

How will the control be tested?

Then test the control like an auditor would.

Ask:

Can we prove this control worked over the last 90 days?

If the answer is no, that is not a reason to panic.

It is useful information.

It means the organization found the execution gap before an auditor, regulator, customer, or incident exposed it.

That is exactly the kind of work modern GRC should be doing.
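The 90-day question can be turned into a simple, repeatable test. The following is a minimal sketch, assuming deployment and approval timestamps can be exported from whatever ticketing or release tooling is in use. The `Deployment` record, its field names, and the sample change IDs are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Deployment:
    change_id: str
    deployed_at: datetime
    approved_at: Optional[datetime]  # None means no approval record was found


def control_exceptions(deployments, as_of, window_days=90):
    """Return change IDs deployed in the window without a prior approval."""
    start = as_of - timedelta(days=window_days)
    failures = []
    for d in deployments:
        if not (start <= d.deployed_at <= as_of):
            continue  # outside the review period
        if d.approved_at is None or d.approved_at > d.deployed_at:
            failures.append(d.change_id)
    return failures


# Made-up sample data for illustration.
as_of = datetime(2025, 6, 30)
sample = [
    Deployment("CHG-101", datetime(2025, 6, 1), datetime(2025, 5, 30)),
    Deployment("CHG-102", datetime(2025, 6, 10), None),
    Deployment("CHG-103", datetime(2025, 6, 15), datetime(2025, 6, 16)),
    Deployment("CHG-090", datetime(2025, 1, 5), None),  # before the window
]

print(control_exceptions(sample, as_of))  # ['CHG-102', 'CHG-103']
```

An empty result does not prove the control is well designed, but a non-empty one shows where approval was missing or came after deployment.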

Real-Life Use Case: When AI Exposes the Gap Between Documented GRC and Operating GRC

Use Case Scenario

A mid-sized SaaS company provides a customer support platform used by healthcare, financial services, and technology clients.

The company has a mature-looking GRC program. It maintains SOC 2 controls, maps policies to NIST- and ISO-aligned practices, performs annual vendor reviews, conducts quarterly access reviews, and has a formal change management policy.

On paper, the program appears strong.

The organization has:

A documented change management policy

A formal risk management process

A third-party risk management program

SOC 2 control mappings

Security review procedures

Access review evidence

Audit calendars

Control owners assigned in the GRC platform

From a leadership perspective, GRC appears “covered.”

But then the product team begins integrating AI into the customer support platform.

The AI feature is designed to summarize customer tickets, suggest response language to support agents, and identify recurring customer issues. The business case is strong. The product team expects the feature to reduce support response times, improve customer satisfaction, and help enterprise clients manage service tickets more efficiently.

The problem is not the business idea.

The problem is that the organization’s GRC program was not prepared to govern how the AI feature would actually be developed, approved, deployed, monitored, and evidenced.

What Happened

The AI feature started as a small internal experiment.

A product manager wanted to test whether generative AI could help support agents summarize long customer tickets. The data science team built a prototype using historical support ticket data. Engineering connected the prototype to a staging environment. A few support managers tested it internally and provided positive feedback.

The pilot moved quickly.

No one believed they were bypassing governance. The product team viewed it as an enhancement to an existing platform. Engineering saw it as a feature update. Data science saw it as model experimentation. Support leadership saw it as an efficiency improvement.

But GRC was not formally involved until late in the process.

By the time compliance reviewed the feature, several important questions were still unclear:

Was the AI use case formally approved?

Was customer data used in model testing?

Was data privacy reviewed before the prototype was built?

Were model limitations documented?

Was human oversight defined?

Were validation results retained?

Were model changes tracked?

Was the deployment tied to a formal change request?

Who owned post-deployment monitoring?

What evidence would be available for audit or customer review?

The company had policies that appeared to cover these areas.

But the policies were not fully translated into operating workflows.

That is where the execution gap became visible.

The Control That Looked Good on Paper

The company’s change management policy stated:

“System, application, and model changes must be reviewed, approved, tested, and documented before deployment.”

The control was mapped to the company’s SOC 2 change management control, internal software development standard, and broader risk management framework.

At first glance, this looked sufficient.

The issue was not that the control was missing.

The issue was that the control did not clearly define how AI-related changes should operate inside the business.

The policy did not define:

What counted as a material AI model change

Whether AI prototypes required review before using customer data

Who approved AI use cases before development

What validation evidence needed to be retained

Whether AI-generated outputs required human review

Who owned post-deployment monitoring

How model updates should be linked to change tickets

How exceptions should be documented and approved

The organization had the right control intent. But it had not translated that intent into an enforceable workflow.

This is exactly the problem this post addresses: frameworks and policies can define expectations, but they do not execute controls for the organization.

Controls only become real when they operate where the work actually happens.

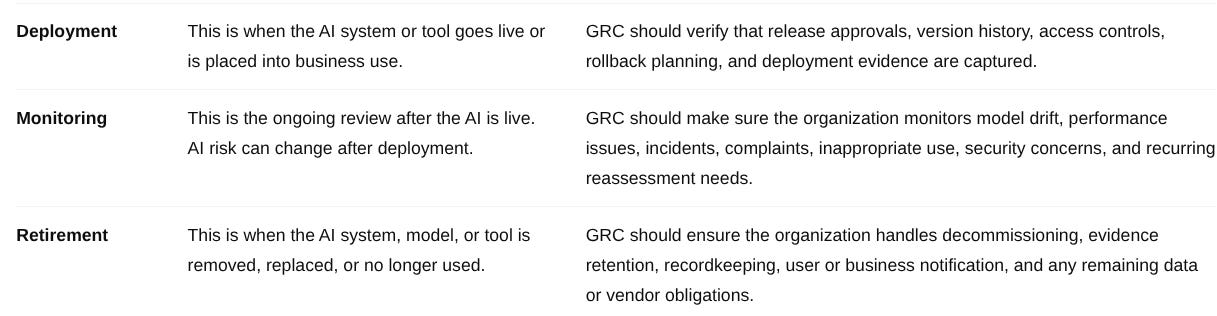

Where the GRC Breakdown Occurred

The breakdown did not happen because one team was careless.

It happened because multiple teams each owned part of the process, but no one owned the full control outcome.

The product team owned the business case.

Data science owned model experimentation.

Engineering owned deployment.

Security reviewed infrastructure risk.

Privacy reviewed customer data concerns.

Compliance owned control requirements.

Audit would eventually test the evidence.

But no single owner was accountable for making sure the AI change control operated from intake through deployment and monitoring.

That created several execution gaps.

The company did not lack GRC documentation.

It lacked operating discipline around the AI lifecycle.

The Audit Trigger

The issue became urgent when a large enterprise customer requested assurance around the new AI feature.

The customer asked:

“Can you provide evidence that AI-related changes are reviewed, approved, tested, monitored, and governed before deployment?”

The company could answer part of the question.

It had a policy.

It had tickets.

It had engineering records.

It had some testing notes.

It had meeting discussions.

It had screenshots.

But it did not have one clean, defensible control record showing the full lifecycle of the AI feature from use case approval to validation, deployment, and monitoring.

The customer’s request exposed the real issue:

The company had GRC artifacts, but not an integrated GRC operating process.

This is where “having GRC covered” started to fall apart.

The Business Impact

The impact was not limited to compliance.

The weak operating model created real business consequences:

The sales team had difficulty responding to customer due diligence questions.

Compliance spent unnecessary time reconstructing evidence.

Engineering had to pause deployment work to clarify approval records.

Privacy had to retroactively review data usage.

Product leadership had to explain the AI governance process to executives.

Audit raised concerns about whether AI-related changes were consistently controlled.

The CISO lacked a clear dashboard showing AI governance status, exceptions, and monitoring ownership.

The organization was not dealing with a catastrophic failure.

It was dealing with something more common: a GRC process that looked mature on paper but became fragile when the business moved quickly.

That is the reality many organizations are facing with AI.

How GRC Helped Fix the Problem

The GRC team did not solve the issue by writing another policy.

That would have only added more documentation.

Instead, the team worked with product, engineering, data science, security, privacy, legal, and audit to turn the AI change control into an operating workflow.

The goal was simple:

Make the control visible, repeatable, enforceable, and evidence-producing.

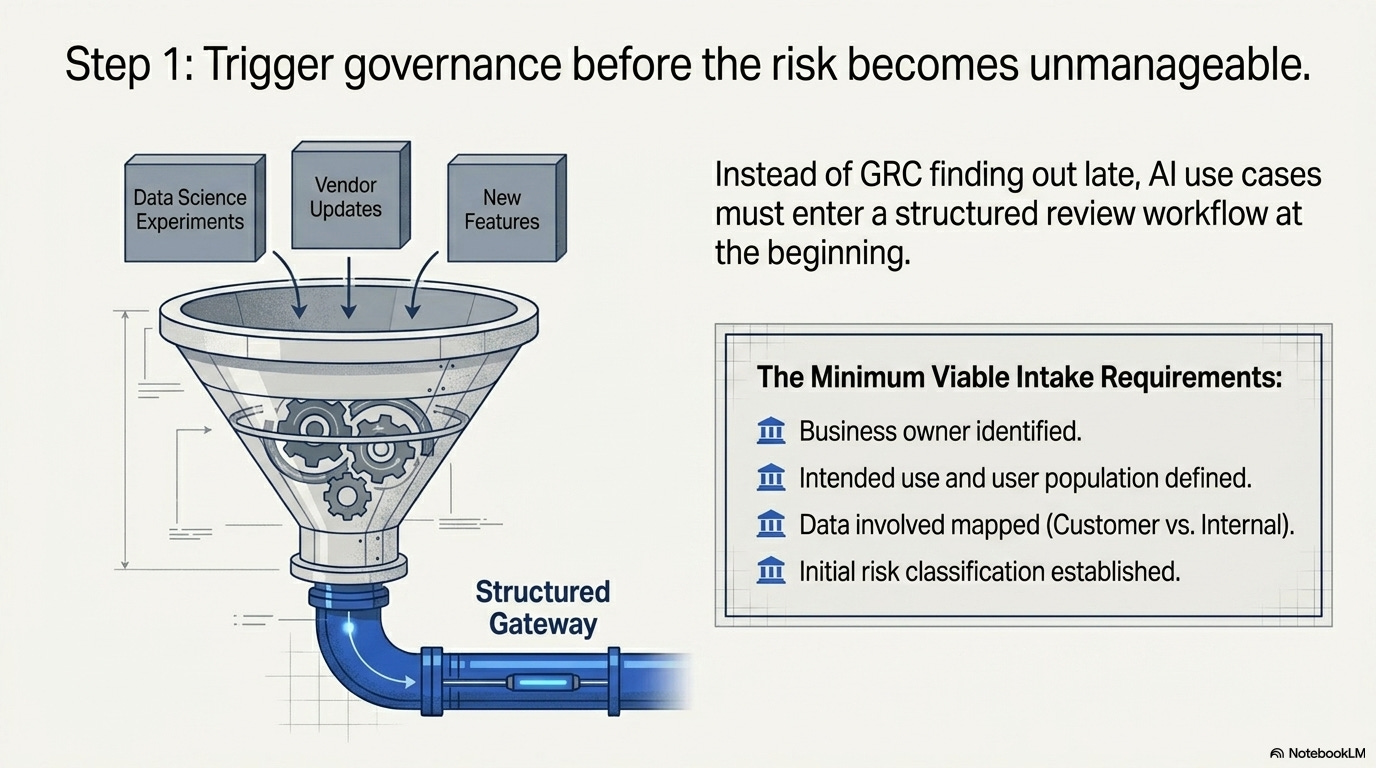

Step 1: Define the AI Use Case Intake Process

The company created a formal AI use case intake workflow.

Any new AI feature, AI-enabled vendor capability, internal AI tool, or material AI model update had to be submitted through a structured intake process before moving forward.

The intake required:

Business owner

Intended use

User population

Data involved

Customer impact

Regulatory or contractual considerations

Vendor involvement, if applicable

Human oversight expectations

Initial risk classification

Required reviewers

This created a clear trigger for governance.

Instead of GRC finding out about AI late in the process, AI use cases entered a review workflow at the beginning.
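A structured intake only works as a trigger if incomplete submissions are caught early. As a rough sketch, the intake fields listed above could be checked like this. The field names are assumptions chosen for the example, and vendor involvement is left optional because the list marks it "if applicable."

```python
# Hypothetical field names mirroring the intake list above.
REQUIRED_INTAKE_FIELDS = [
    "business_owner",
    "intended_use",
    "user_population",
    "data_involved",
    "customer_impact",
    "regulatory_considerations",
    "human_oversight",
    "initial_risk_classification",
    "required_reviewers",
]


def is_blank(value):
    """Treat None, whitespace-only strings, and empty collections as blank."""
    if value is None:
        return True
    if isinstance(value, str):
        return value.strip() == ""
    if isinstance(value, (list, tuple, set)):
        return len(value) == 0
    return False


def missing_intake_fields(submission):
    """Return required intake fields that are absent or left blank."""
    return [name for name in REQUIRED_INTAKE_FIELDS if is_blank(submission.get(name))]


# Made-up submission for illustration.
submission = {
    "business_owner": "Head of Support",
    "intended_use": "Summarize customer tickets for support agents",
    "user_population": "Internal support agents",
    "data_involved": "Historical support tickets",
    "customer_impact": "",
    "required_reviewers": ["Security", "Privacy"],
}

print(missing_intake_fields(submission))
```

Here the submission would be returned to the requester because customer impact, regulatory considerations, human oversight, and the initial risk classification are still missing.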

Step 2: Clarify Ownership Across the AI Lifecycle

The company then defined ownership by lifecycle stage.

This was important because AI governance did not belong to one team.

This did not make the process overly bureaucratic.

It made accountability clear.

Everyone could see who owned each decision, what evidence was required, and where the handoffs occurred.

Step 3: Connect Approval to the Actual Workflow

Before the fix, approvals happened across email, chat, meetings, and technical tickets.

After the fix, the organization required AI-related approvals to be captured in a single workflow record.

The workflow included:

AI use case intake approval

Data usage review

Security review

Privacy review

Model validation evidence

Business risk acceptance

Deployment approval

Exception approval, if applicable

Monitoring owner assignment

Deployment could not proceed unless required approvals were completed.

This changed the control from a policy statement into an enforceable process.
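In practice, "deployment could not proceed" usually means some check runs before release and fails loudly. The sketch below shows one possible shape for that check, assuming the workflow record can be read as a dictionary of approval statuses. The step names, the record layout, and the `DeploymentBlocked` exception are all hypothetical, and exception approvals are left out for brevity.

```python
# Hypothetical step names mirroring the workflow list above.
REQUIRED_APPROVALS = {
    "use_case_intake",
    "data_usage_review",
    "security_review",
    "privacy_review",
    "model_validation",
    "business_risk_acceptance",
    "deployment_approval",
}


class DeploymentBlocked(Exception):
    """Raised when a release is attempted without the required approvals."""


def check_deployment_gate(record):
    """Return True if the record is complete; otherwise raise DeploymentBlocked."""
    approved = {
        step for step, status in record.get("approvals", {}).items()
        if status == "approved"
    }
    missing = sorted(REQUIRED_APPROVALS - approved)
    if not record.get("monitoring_owner"):
        missing.append("monitoring_owner")
    if missing:
        raise DeploymentBlocked("Missing before deployment: " + ", ".join(missing))
    return True


# Made-up record: privacy review is still pending and no monitoring owner is set.
record = {
    "approvals": {step: "approved" for step in REQUIRED_APPROVALS - {"privacy_review"}},
    "monitoring_owner": "",
}

try:
    check_deployment_gate(record)
except DeploymentBlocked as exc:
    print(exc)  # Missing before deployment: privacy_review, monitoring_owner
```

A check like this could run as a step in a release pipeline, so that a missing approval blocks the release instead of being discovered later.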

Step 4: Build Evidence Into the Process

The GRC team also redesigned evidence collection.

Instead of manually chasing artifacts before audits or customer reviews, the workflow automatically retained key evidence.

Evidence included:

Intake submission

Risk classification

Reviewer approvals

Model validation results

Data use approval

Security and privacy review notes

Version details

Deployment approval

Monitoring plan

Exception records

Periodic review results

This changed the audit conversation.

Before, the team had to say:

“We need to gather the evidence.”

After the workflow was implemented, the team could say:

“Here is the evidence the process created.”

That is the difference between administrative GRC and operational GRC.
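To illustrate evidence as a by-product of the work, here is a minimal sketch in which every workflow step writes its own timestamped entry at the moment it happens. The in-memory `evidence_log`, the function names, and the sample actors are assumptions made for the example. A real implementation would write to a system of record, not a Python list.

```python
import json
from datetime import datetime, timezone

# Stand-in for a system of record (hypothetical; in-memory for illustration).
evidence_log = []


def record_step(record_id, step, actor, outcome, details=None):
    """Log a workflow step as it happens and return the evidence entry."""
    entry = {
        "record_id": record_id,
        "step": step,
        "actor": actor,
        "outcome": outcome,
        "details": details or {},
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }
    evidence_log.append(entry)
    return entry


def evidence_for(record_id):
    """Return every evidence entry captured for one workflow record."""
    return [e for e in evidence_log if e["record_id"] == record_id]


# Made-up steps for a single AI use case.
record_step("AI-001", "privacy_review", "privacy.lead", "approved")
record_step("AI-001", "model_validation", "ds.lead", "passed",
            {"model_version": "1.3.0"})

print(json.dumps(evidence_for("AI-001"), indent=2))
```

The point of the design is that no one assembles the evidence afterward. Answering an audit request becomes a lookup by record ID.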

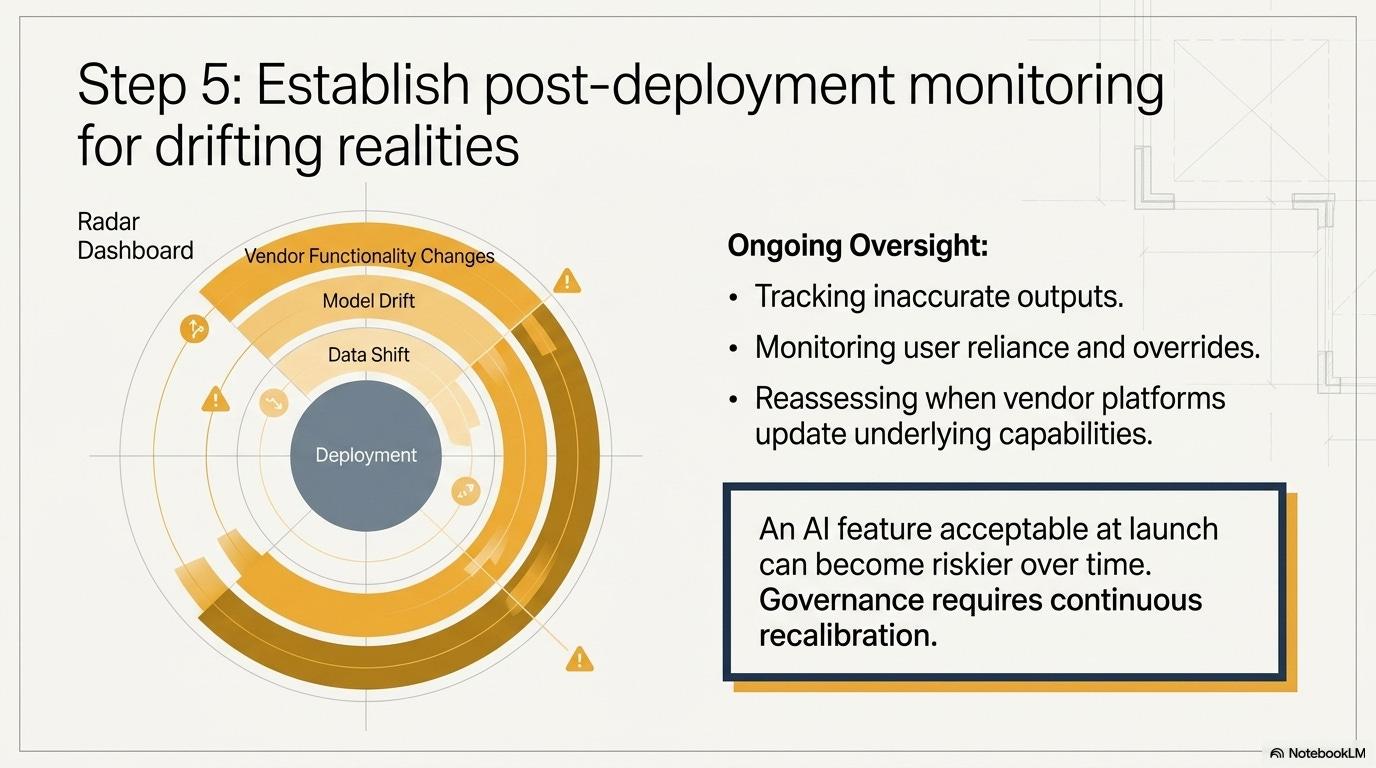

Step 5: Establish Post-Deployment Monitoring

The company also recognized that AI governance could not stop at deployment.

The AI feature needed ongoing oversight.

The monitoring process included:

Monthly review of model performance

Tracking of inaccurate or inappropriate AI-generated suggestions

Review of support agent overrides

Customer complaint monitoring

Drift or degradation review

Security and privacy incident escalation

Quarterly AI control review

Periodic reassessment if the feature’s use changed

This was important because an AI feature that is acceptable at launch may become riskier over time.

Data can shift.

Users can rely on it differently.

The business process can change.

The model can behave unexpectedly.

Vendor or platform updates can introduce new functionality.

That is why AI governance requires lifecycle thinking, not one-time approval.
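One of the monitoring items above, the review of support agent overrides, lends itself to a simple recurring check. The sketch below flags months where agents overrode AI suggestions more often than an agreed threshold. The 30% threshold and the monthly counts are made up for illustration, and the right signal and threshold would depend on the feature.

```python
def override_rate(shown, overridden):
    """Share of AI suggestions that agents overrode; 0.0 if none were shown."""
    if shown == 0:
        return 0.0
    return overridden / shown


def months_needing_review(monthly_counts, threshold=0.30):
    """Return months whose override rate exceeds the threshold."""
    return [
        month
        for month, (shown, overridden) in monthly_counts.items()
        if override_rate(shown, overridden) > threshold
    ]


# Made-up counts: month -> (suggestions shown, suggestions overridden).
counts = {
    "2025-01": (1000, 120),
    "2025-02": (1100, 180),
    "2025-03": (900, 360),
}

print(months_needing_review(counts))  # ['2025-03']
```

A flagged month is not a finding on its own. It is a trigger for the monitoring owner to look at drift, data changes, or a change in how the feature is being used.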

What Changed After the Fix

After the AI governance workflow was implemented, the organization had a stronger operating model.

The company could now show:

Which AI use cases were approved

Who approved them

What data was reviewed

What validation was performed

What risks were accepted

What controls were required

What evidence was retained

Who owned monitoring

What exceptions existed

Whether the control operated during the review period

This improved more than audit readiness.

It improved business confidence.

Sales could respond to enterprise customer questions faster.

Compliance no longer had to reconstruct evidence manually.

Security and privacy were brought into the process earlier.

Product teams had clearer expectations.

Leadership had better visibility into AI risk.

Audit had a more defensible control trail.

The organization did not eliminate all AI risk.

That is not realistic.

But it created a repeatable governance process that could be explained, tested, and improved.

Key Lessons from the Use Case

This use case highlights several important lessons for GRC teams.

First, a documented control is not enough.

The organization already had change management requirements, risk management expectations, and control mappings. The issue was that those requirements were not fully operationalized for AI.

Second, AI exposes existing GRC weaknesses.

The company’s problems with informal approvals, manual evidence collection, unclear ownership, and fragmented workflows already existed. AI simply made those weaknesses more visible and more urgent.

Third, GRC must be involved before the audit.

Modern GRC cannot only ask for evidence after the fact. It needs to help design workflows that create evidence as part of normal operations.

Fourth, ownership must be explicit.

AI governance touches many teams. Without clear accountability, important control activities will be assumed, delayed, skipped, or poorly evidenced.
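
A simple way to test for explicit ownership is to list the lifecycle stages and check that each has one accountable owner. The sketch below assumes a "one accountable owner per stage" rule, which is a common convention rather than something the scenario prescribes. The stage and role names are hypothetical.

```python
# Hypothetical lifecycle stages and role names, for illustration only.
LIFECYCLE_STAGES = (
    "intake",
    "data_review",
    "model_validation",
    "deployment_approval",
    "monitoring",
    "exception_management",
)


def ownership_gaps(owners: dict[str, list[str]]) -> dict[str, str]:
    """Flag stages that have no accountable owner or more than one."""
    gaps = {}
    for stage in LIFECYCLE_STAGES:
        assigned = owners.get(stage, [])
        if not assigned:
            gaps[stage] = "no accountable owner"
        elif len(assigned) > 1:
            gaps[stage] = "multiple accountable owners: " + ", ".join(assigned)
    return gaps


# Illustrative assignments, not real data.
owners = {
    "intake": ["product_manager"],
    "data_review": ["privacy_lead"],
    "model_validation": ["data_science_lead", "engineering_lead"],
    "deployment_approval": ["engineering_lead"],
    "monitoring": [],
}

for stage, problem in ownership_gaps(owners).items():
    print(f"{stage}: {problem}")
```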

Fifth, AI governance must follow the lifecycle.

Approval before deployment is important, but it is not enough. AI features require ongoing monitoring, reassessment, exception management, and evidence retention.

Practical Takeaway for GRC Professionals

If your organization is adopting AI, do not start by asking only:

“Do we have an AI policy?”

Ask:

“Where does the AI governance process actually operate?”

Then look for the control evidence.

Can you show:

The approved AI use case?

The assigned business owner?

The data review?

The model or vendor validation?

The approval trail?

The deployment linkage?

The monitoring owner?

The exception process?

The evidence from the last 90 days?
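
The 90-day question can be turned into a small, repeatable check. The sketch below assumes each control has a list of dated evidence items and asks whether at least one falls inside the lookback window. The control names, dates, and the `operated_in_window` helper are hypothetical.

```python
from datetime import date, timedelta


def operated_in_window(evidence_dates: list[date], as_of: date, days: int = 90) -> bool:
    """True if at least one piece of evidence falls within the lookback window."""
    window_start = as_of - timedelta(days=days)
    return any(window_start <= d <= as_of for d in evidence_dates)


# Illustrative controls and evidence dates, not real data.
evidence_log = {
    "ai_use_case_approval": [date(2024, 5, 2)],
    "monthly_performance_review": [date(2024, 4, 30), date(2024, 5, 31)],
    "quarterly_ai_control_review": [date(2023, 12, 15)],
    "exception_review": [],
}

as_of = date(2024, 6, 30)
unproven = [
    name
    for name, dates in evidence_log.items()
    if not operated_in_window(dates, as_of)
]
print(unproven)
```

A single item in the window is a deliberately low bar. A monthly control would need evidence for each month in the period, so a stricter version of this check would count occurrences against the expected frequency.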

If the answer is no, the issue is not necessarily that your organization lacks governance.

The issue may be that governance has not been translated into execution.

That is the gap AI is exposing.

And that is where modern GRC professionals can provide real value.

Closing Summary

This use case shows why the question is no longer whether an organization has GRC documentation.

Most organizations do.

The stronger question is whether the organization can prove that GRC is working inside the business.

In this scenario, the SaaS company had policies, frameworks, controls, and audit processes. But when AI entered the environment, the organization discovered that its controls were not fully embedded into workflows, ownership was unclear, and evidence was too dependent on manual reconstruction.

By turning AI governance into an operating process, the company moved from documented GRC to operational GRC.

That is the real maturity shift.

Not more paperwork.

Better execution.

Conclusion: The Real Test of GRC Is Whether It Works When the Business Moves

The lesson from this article and use case is straightforward:

Most organizations do not lack GRC documentation.

They lack consistent GRC execution.

That distinction matters.

A company can have strong policies, mapped controls, recognized frameworks, assigned owners, completed assessments, audit calendars, and evidence repositories, and still struggle when asked to prove how a control actually operated in the real business environment.

That is what AI is exposing.

AI is not creating every GRC weakness from scratch. In many cases, it is revealing weaknesses that were already present:

Informal approvals

Unclear ownership

Manual evidence collection

Disconnected workflows

Weak exception management

Point-in-time reviews

Limited post-deployment monitoring

Controls that exist in documentation but not in day-to-day operations

The SaaS use case makes this tangible.

The company had a mature-looking GRC program. It had change management requirements, security procedures, SOC 2 controls, vendor risk processes, and audit evidence. But when the product team introduced an AI-enabled customer support feature, the organization discovered that its controls were not fully prepared to govern how the AI feature was developed, approved, deployed, monitored, and evidenced.

The problem was not the AI feature itself.

The problem was that governance had not been embedded into the workflow early enough.

The AI use case was not formally reviewed at intake. Customer data use was not fully reviewed before experimentation. Model validation evidence was fragmented.

Deployment activity was not cleanly tied to approval records. Monitoring ownership was unclear. Compliance had to reconstruct evidence after the work had already happened.

That is not operational GRC.

That is documentation trying to catch up with reality.

The fix was not to write another policy and hope the business followed it.

The fix was to translate the control into an operating process.

That meant defining an AI use case intake workflow, clarifying ownership across the AI lifecycle, connecting approvals to actual deployment activity, building evidence into the process, and establishing post-deployment monitoring.
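
Connecting approvals to deployment activity can be as simple as a gate that refuses to release a version without a matching approval record. The sketch below is one possible shape for that check, assuming approvals are stored per use case and model version. The `Approval` fields, status values, and identifiers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Approval:
    use_case_id: str
    model_version: str
    approved_by: str
    status: str  # assumed values: "approved", "rejected", or "pending"


def can_deploy(use_case_id: str, model_version: str, approvals: list[Approval]) -> bool:
    """Allow deployment only if this exact version has an approved record."""
    return any(
        a.use_case_id == use_case_id
        and a.model_version == model_version
        and a.status == "approved"
        for a in approvals
    )


# Illustrative approval records, not real data.
approvals = [
    Approval("ticket-summarizer", "1.0.0", "risk_committee", "approved"),
    Approval("ticket-summarizer", "1.1.0", "risk_committee", "pending"),
]

print(can_deploy("ticket-summarizer", "1.0.0", approvals))
print(can_deploy("ticket-summarizer", "1.1.0", approvals))
```

Matching on the exact version is the important design choice here. An approval for version 1.0.0 does not carry over to 1.1.0, which is what ties each release to its own approval record.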

That is the maturity shift modern GRC teams need to make.

Not more paperwork.

Better execution.

For GRC professionals, this is where the role becomes more strategic. The value of GRC is not only in interpreting frameworks or preparing for audits. The value is in helping the organization turn governance expectations into repeatable business processes that can be tested, evidenced, and improved.

That means asking harder operational questions:

Where does this control actually happen?

Who owns the decision?

What system captures the approval?

What evidence is created by the workflow?

What happens when the process is skipped?

How are exceptions approved and reviewed?

Who monitors the control after deployment?

Can we prove the control worked over the last 90 days?

These questions are especially important for AI governance because AI risk does not stop at launch. A model can drift. Data can shift. Vendor functionality can change. Users can rely on outputs in unexpected ways. Business reliance can increase. Regulatory expectations can evolve.

A one-time approval is not enough.

AI governance requires lifecycle thinking.

That does not mean every organization needs an overly complex governance model. In fact, many organizations should start smaller. Pick one critical AI-related control, such as AI tool intake, model change management, vendor AI review, or post-deployment monitoring. Map how the process actually works today. Identify where evidence is weak. Clarify ownership. Define the minimum viable workflow. Then test whether the control can be proven over the last 90 days.

That is practical GRC.

The organizations that will be better positioned in the AI era are not necessarily the ones with the longest policies or the most impressive control matrices. They will be the ones that can show:

Which AI use cases are approved

Who owns them

What risks were reviewed

What data was evaluated

What validation occurred

What exceptions exist

What monitoring is active

What evidence proves the control operated

How governance adapts when the technology changes

That is what separates documented GRC from operational GRC.

In the past, having a strong framework may have been enough to demonstrate maturity.

Today, it is only the starting point.

The real question is no longer:

Do we have GRC covered?

The better question is:

Can we prove GRC is actually working?

That is not just an audit question.

It is a business resilience question.

It is a customer trust question.

It is a leadership visibility question.

It is a cybersecurity and risk management question.

And as AI becomes more embedded into business processes, vendor platforms, software development, customer interactions, analytics, and decision support, it will become one of the clearest measures of whether a GRC program is truly mature.

Because modern GRC is not proven by what is written down.

It is proven by what operates.

Need to share these insights with your leadership or team? 📊

It is one thing to understand the execution gap; it is another to convince your organization to change how it manages risk. To help you drive this conversation internally, we’ve put together a companion slide deck: Operational AI Governance.

This deck distills the core takeaways from this post into a presentation-ready format. It highlights exactly why frameworks do not execute controls, how AI turns weak execution into visible risk, and the practical steps needed to bridge the gap.

Whether you are presenting to the board, your engineering leads, or your risk committee, use these slides to show how your organization can shift from administrative documentation to true operational GRC, where workflows are enforceable and evidence is built directly into the process.

Prefer to watch rather than read? 🎬

A documented control is not always an operating control. When it comes to AI, the gap between what your policy says and how your technical teams actually work is expanding fast.

To help visualize this challenge, we created a short explainer video: The AI Execution Gap.

In just a few minutes, this video breaks down exactly why traditional frameworks fail to execute controls, how fast-moving AI models turn weak execution into highly visible risk, and the shift required to build true operational governance.

Whether you are an auditor, a security leader, or a GRC practitioner, watch the explainer below to see why the future of compliance means moving past the paperwork to ensure controls operate where the work actually happens.

References

National Institute of Standards and Technology. Artificial Intelligence Risk Management Framework (AI RMF 1.0). NIST AI 100-1, January 2023.

National Institute of Standards and Technology. NIST AI Risk Management Framework Playbook.

National Institute of Standards and Technology. The NIST Cybersecurity Framework (CSF) 2.0. February 2024.

National Institute of Standards and Technology. Security and Privacy Controls for Information Systems and Organizations, NIST Special Publication 800-53 Revision 5.

National Institute of Standards and Technology. Secure Software Development Framework (SSDF) Version 1.1, NIST Special Publication 800-218. February 2022.

International Organization for Standardization. ISO/IEC 42001:2023 — Artificial Intelligence Management System.

European Union. Regulation (EU) 2024/1689, Artificial Intelligence Act. See Article 9 on risk management systems and Article 72 on post-market monitoring for high-risk AI systems.

National Institute of Standards and Technology. AI RMF Core: Govern, Map, Measure, Manage. NIST AI Resource Center.